Defining Verification Frameworks for AI Responses

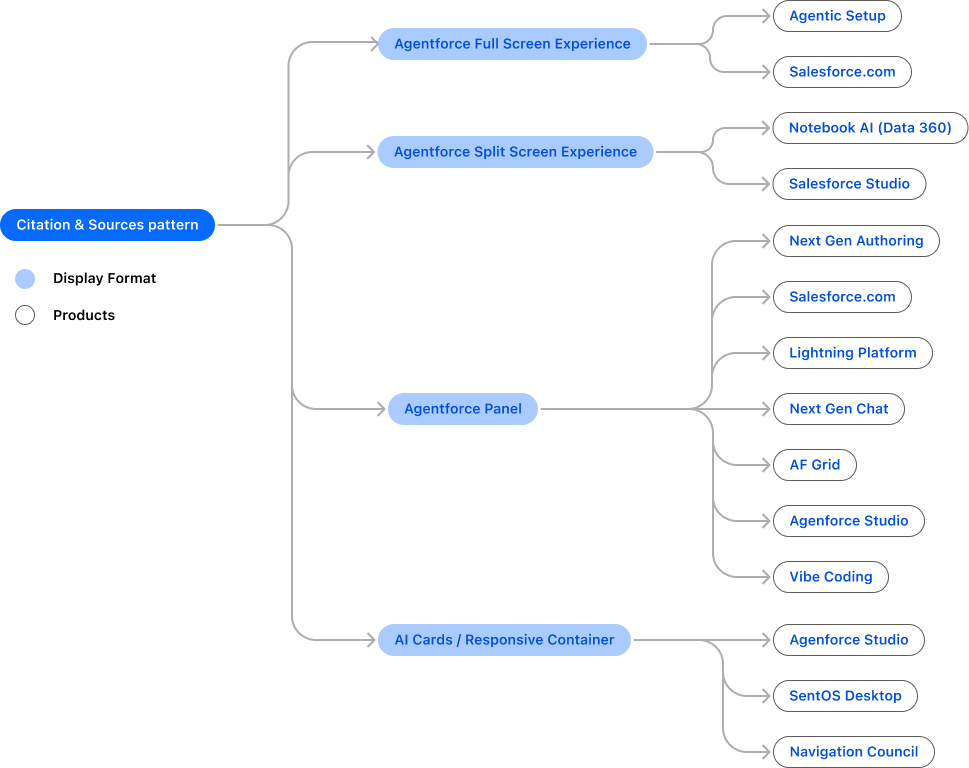

I led the design of the system that defines how AI responses are verified and explained across Salesforce’s Lightning Platform.

This system standardizes how AI outputs are presented, including citations and source attribution. It became foundational across product lines and enabled teams to adopt it at scale.

It establishes a consistent interaction model for AI, ensuring users can understand where answers come from and how reliable they are.

Role

Defined the interaction model for agentic experiences across platform and product surfaces

Established system-level patterns in a zero-PRD, highly ambiguous space

Drove cross-org decisions across Design, Eng, and PM to align on a unified approach

Shaped feasibility and architecture in partnership with engineering (not just design)

Translated strategy into reusable, production-aligned patterns adopted across teams

Set quality bar, governance, and rollout strategy for scalable adoption

Managed stakeholders and expectations at the platform and product levels

Challenges

Existing approaches to AI citations broke down at both system and experience levels.

Scaling

Citations were built for a single platform (Lightning) and did not scale to multi-cloud AI

No shared interaction contract across AI products

Teams created fragmented, product-specific solutions

Legal requirements for source transparency lacked a system-level solution

Experience

Inline citations disrupted the natural reading flow

High citation density reduced comprehension

Expanding source lists pushed primary content off-screen

Low engagement with full source views revealed a breakdown in usability

Strategies

1. Moved citations out of inline text

Inline citations broke reading flow at scale.

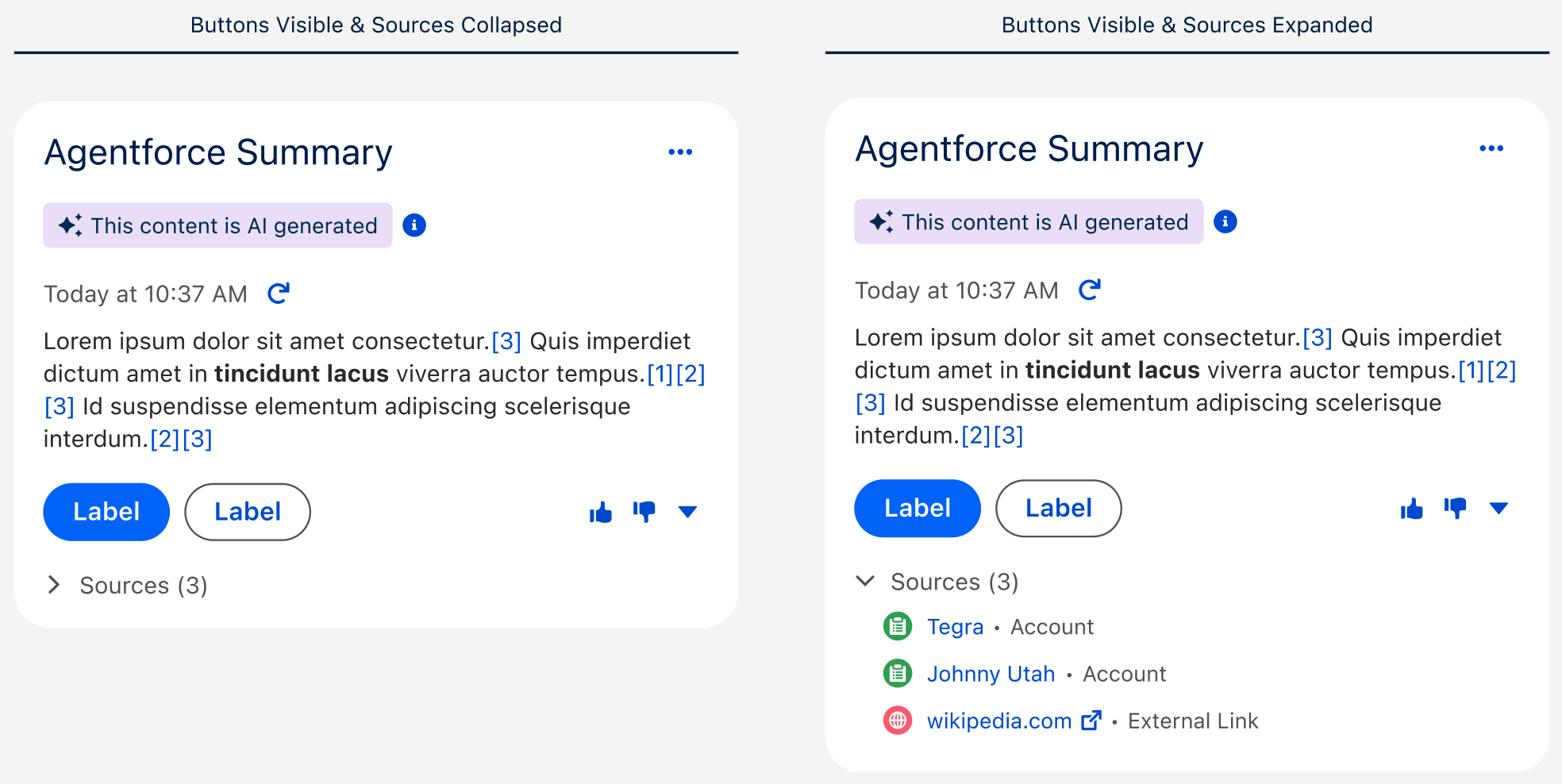

→ Shifted to structured surfaces (popover/panel)

→ Tradeoff: less immediate visibility, better readability

2. Designed patterns for multiple surfaces

AI appears in panels, full pages, and embedded views.

→ Created patterns that adapt across contexts

→ Result: reusable system across products

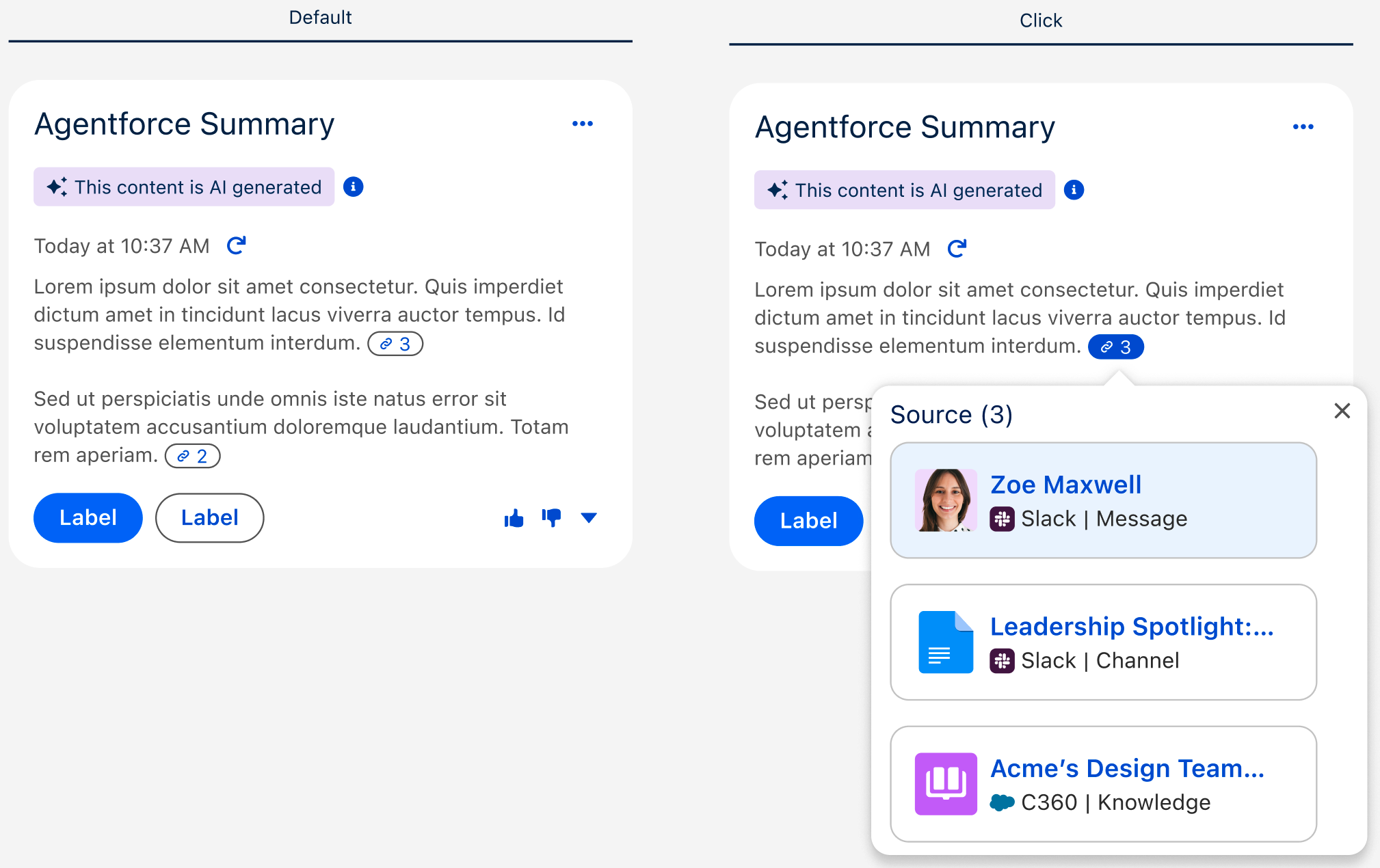

3. Introduced citation markers

Connected specific content to its source.

→ Result: clearer traceability of AI responses

4. Enabled flexible implementation

Teams had different technical constraints.

→ Introduced optional panel-based source views

→ Result: easier adoption across teams

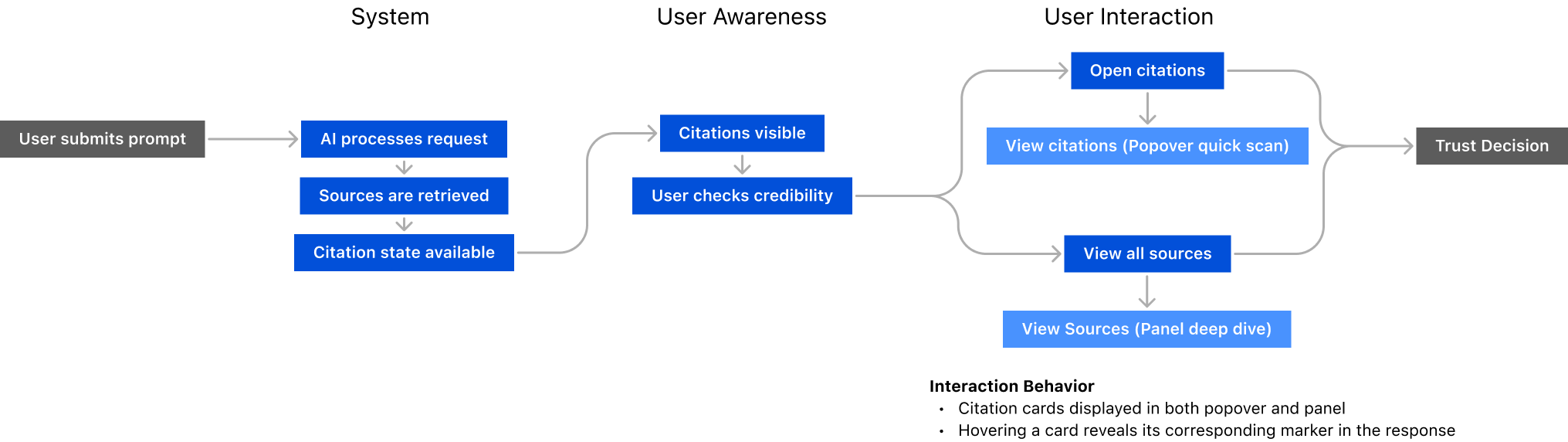

User Flow: progressive trust verification system

Impact

Scaled across 14 product lines, replacing fragmented, one-off implementations

Established a unified approach to how AI responses are verified and attributed across products

Made source transparency a core part of AI interaction design

Accelerated delivery of AI experiences through reusable interaction patterns

Design

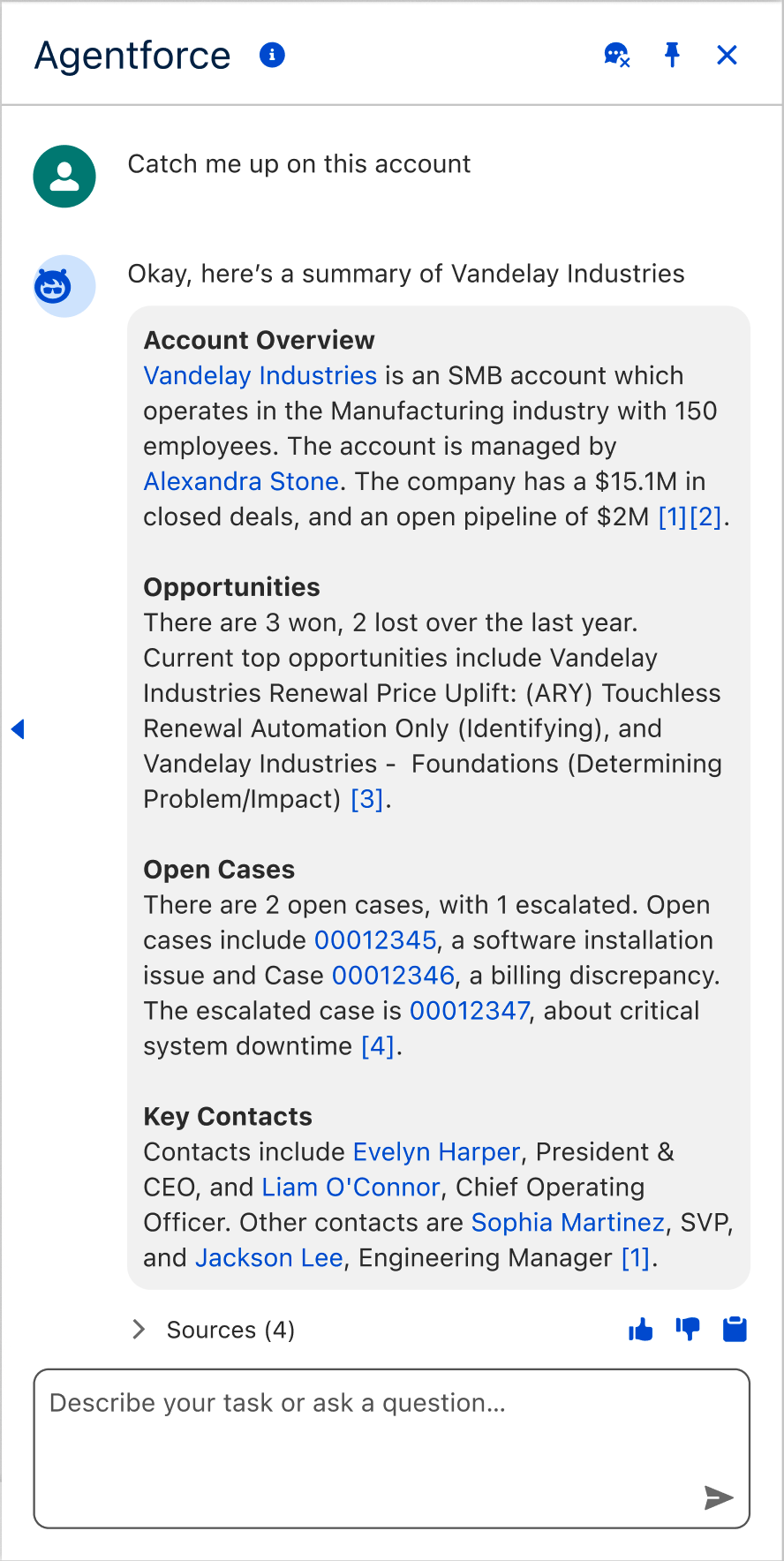

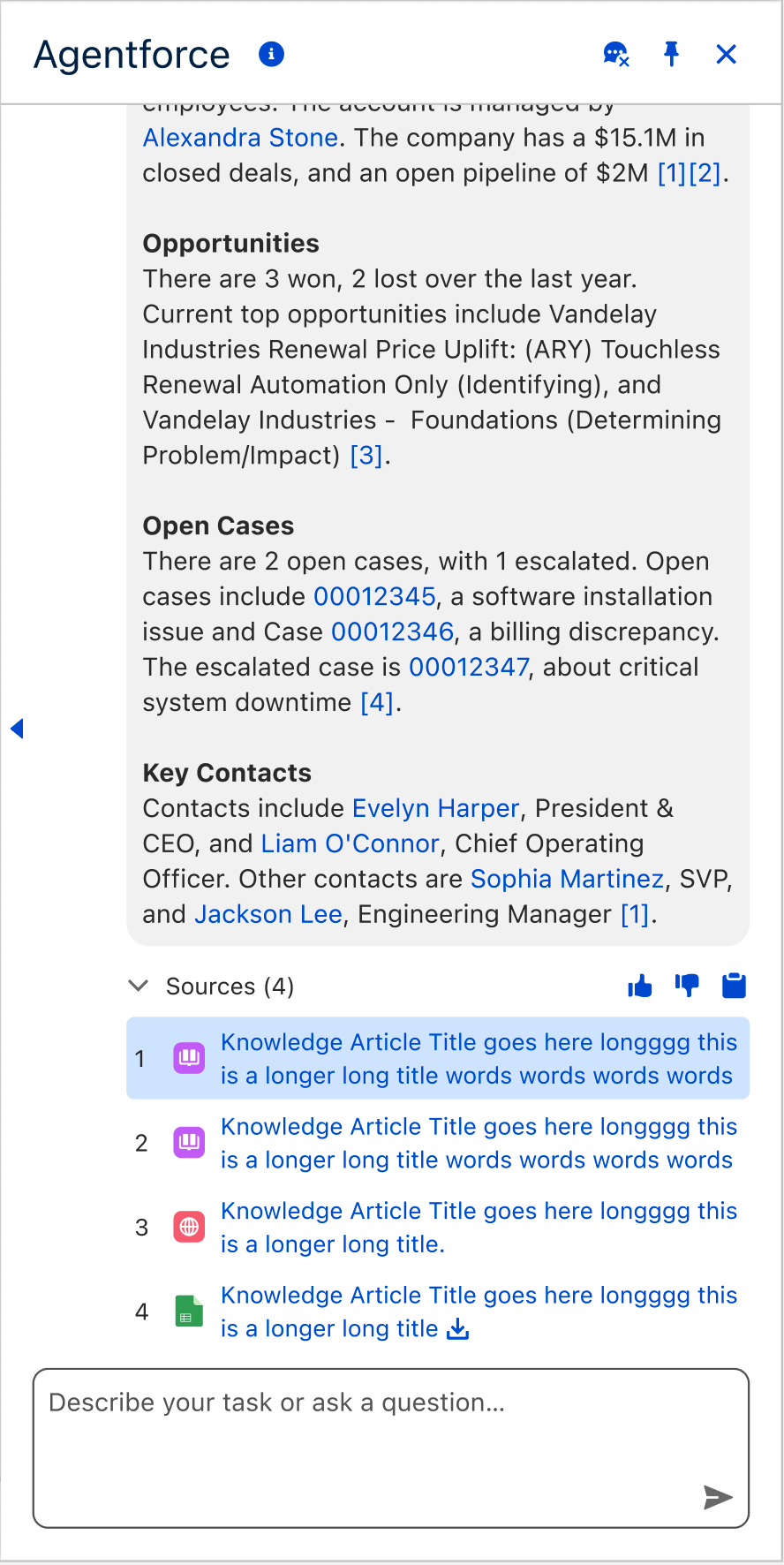

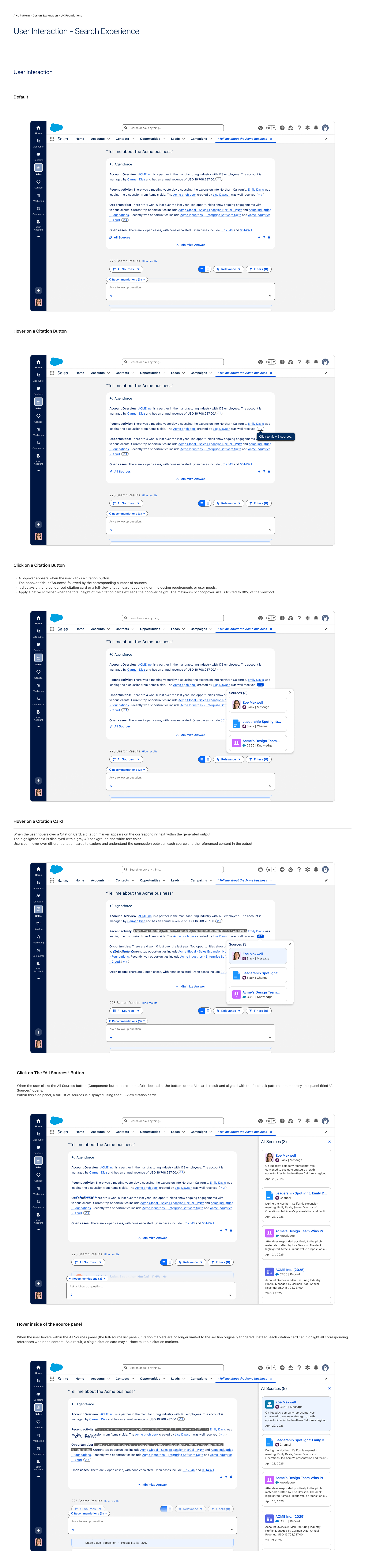

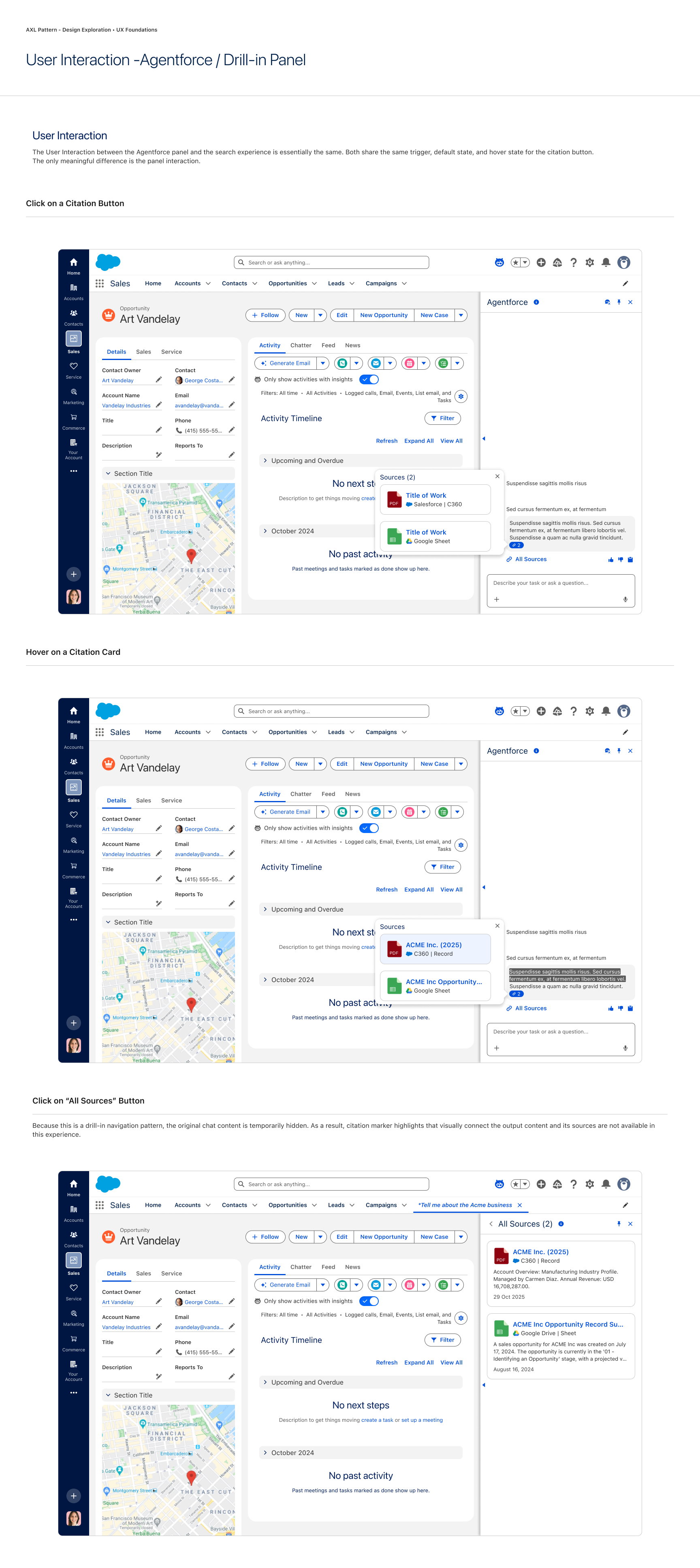

Interaction

Contextual View (Popover): triggered by clicking an in-text citation.

Global View (Local Panel): triggered by the footer/main action button.

Global View (Agentforce Panel): triggered by the footer/main action button.

AI Search Experience

Agentforce Panel Experience

Before and after (AI Card)

The new design improves readability by removing the disruption of inline citations and moving source access into a popover. It also eliminates layout shifts, keeping the AI card height stable and preventing content from being pushed down on interaction.

Before

After